How to Prevent Content Scraping Before It Starts

It is one thing when someone copies your outfit or your new hairstyle, but no one likes to see someone copy work that took hours to complete. Unfortunately, this happens quite often with online content. Not only can someone copy your articles, but the copy can actually rank higher on a search engine results page (SERP) than your original, which is even more infuriating. It is therefore important for a blogger to take the necessary precautions to keep other websites from copying content.

The first thing a blogger should do is check whether their content is being copied at all. Although Google has rules in place about scraped, or copied, content, it is in the blogger’s best interest to find this content and deal with it as quickly as possible. You can do this through a few different methods:

- Copyscape – This is a service that allows you to type in the URL of your content and then find the websites where it has been copied. It comes in a free and a premium version.

- Google Alerts – This works best if you don’t post new content very often. You can type in the title of your post in quotation marks, and you will then get an alert email whenever that title shows up on Google again.

- Webmaster Tools – This will give you a list of websites that link to pages on your site. If you see that one website is linking back to your site unusually often (more than three or four times), you could have a content scraper on your hands. Learn more about using Webmaster Tools.

Once you have discovered that someone is scraping your content, you can take measures to make sure the website removes it. In many cases, simply finding the contact information of the website owner and asking them to take it down is enough. In other cases, you may have to go through Google and file a complaint. This is done by going to the Google DMCA page, answering a few questions to describe your situation, and then giving the URLs of the original content and the scraped content.

How to Make Sure Your Content Doesn’t Get Scraped

Discovering if your content has been copied and then reporting the copied content is important, but most bloggers can actually stop the majority of content copying in the first place. If you take extra measures, you can make it much harder for a website to steal your hours of hard work. Consider some of the tips below:

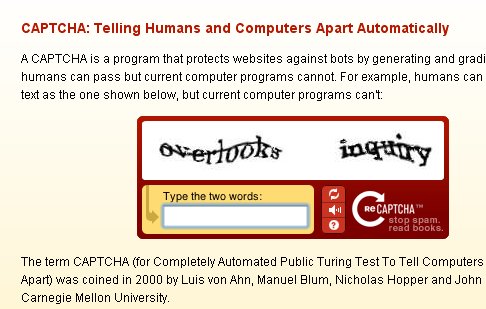

1. CAPTCHA – Oftentimes content is copied not by a person, but by a computer. A CAPTCHA makes it harder for bots to scrape your content, and it also helps reduce the amount of spam on your site. A CAPTCHA usually asks a visitor to type in a few jumbled letters and numbers, as in the example image. This helps ensure that a person is on your site and not a bot. Although this won’t stop people from copying your content by hand, you would be surprised at how many fewer issues you have.

2. Pinging – You can actually let search engines know that your content has been published before you include it in an RSS feed. Scrapers often pull articles from feeds, so getting your version noticed first helps establish it as the original. You can do this by using a ping service, which is a service that notifies a server that new content has been uploaded. You can learn more about setting up this service here.

3. Canonical Links – You can add the rel="canonical" tag to help make sure that your website gets credit for any content that is scraped. When a scraper copies your page wholesale, the tag is copied along with it and keeps pointing back to your original URL. Although it doesn’t stop the scrapers, it will at least help give you the credit you deserve. Google is also able to see this tag, which helps it identify your page as the original. You can learn how to insert the tag here.
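
Tips 2 and 3 above can be sketched roughly in Python. Treat this as an illustration under stated assumptions rather than a definitive setup: it assumes your ping service accepts the common `weblogUpdates.ping` XML-RPC call, the endpoint you pass to `send_ping` is whatever your chosen service documents, and all function names and example URLs are hypothetical.

```python
import xmlrpc.client
from html import escape
from html.parser import HTMLParser


def build_ping_payload(blog_name, blog_url):
    """Serialize a weblogUpdates.ping call as an XML-RPC request body."""
    return xmlrpc.client.dumps((blog_name, blog_url),
                               methodname="weblogUpdates.ping")


def send_ping(ping_endpoint, blog_name, blog_url):
    """Send the ping over the network. `ping_endpoint` is an assumption:
    use the XML-RPC URL published by the ping service you picked."""
    server = xmlrpc.client.ServerProxy(ping_endpoint)
    return server.weblogUpdates.ping(blog_name, blog_url)


def canonical_tag(page_url):
    """Build the <link> element that goes inside the page's <head>."""
    return '<link rel="canonical" href="%s">' % escape(page_url, quote=True)


class _CanonicalFinder(HTMLParser):
    """Remember the href of the first rel="canonical" link in a page."""

    def __init__(self):
        super().__init__()
        self.href = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower()
        if tag == "link" and rel == "canonical" and self.href is None:
            self.href = attrs.get("href")


def find_canonical(html_text):
    """Return the canonical URL declared in `html_text`, or None."""
    finder = _CanonicalFinder()
    finder.feed(html_text)
    return finder.href


if __name__ == "__main__":
    # Hypothetical blog details, printed locally without any network call.
    print(build_ping_payload("My Blog", "https://blog.example/"))
    print(canonical_tag("https://blog.example/how-to-stop-scrapers"))
```

Running `find_canonical` on the HTML of a suspected copy is a quick way to see whether the scraper kept your tag. If it returns your own URL, search engines are still being pointed at your original.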

It is important to remember that content scraping isn’t always bad. If a website is giving you credit, this could actually help drive traffic to your website and help you gain visibility. Your content is being put in front of a new audience, and that audience may want to visit your site to read similar articles. Many websites even strike up a business arrangement centered on republishing content. However, content scraping without proper attribution is something no blog owner wants to see. The first step should be trying to avoid it in the first place by installing a CAPTCHA, pinging, or using canonical links.

What do you do to prevent content scraping? Have you found that this is a common problem for most blogs?

Image © ekaterinabondar – Fotolia.com
